In the SSP we have been putting together a small suite of performance tests to use as before/after checks for the SITS upgrade. Because our team is a mix of IS and non-IS staff, it was important to come up with a procedure that was:

- Easily maintainable

- Accessible to team members who don’t come from a programming background

Selenium was a natural choice, since it is already used elsewhere in the department and has a handy interface that lets you record tests easily.

When we first started with Selenium, we mainly used Selenium IDE, the test recording tool (see the original post on our testing process). It was useful for writing simple cases, but it quickly fell down when we tried to write more complex tests, and the auto-recorded tests tended to be fragile and difficult to maintain. Each test is stored individually in HTML format, so you can’t use things most programmers take for granted, such as shared setup/teardown or global variables. It also doesn’t support common control-flow constructs like if/else or loops. The result is a great deal of repetition.

To solve these issues, we eventually exported our original tests as scripts. We chose Python because there was already some Python knowledge in the team, and it is relatively easy to read, even for non-programmers. It also lends itself well to “fiddling” in the IDLE interactive shell. Our Python scripts drive Selenium through Python’s built-in unittest framework. The scripts made tests much easier to write and maintain, but each test was still a separate script with no shared code.

For this upgrade we had some time to improve the tests. There were a few major issues that needed to be addressed:

- Reducing code repetition (DRY)

- Making tests more robust

- Automating test output

To cut down on repetition, we followed Eli Bendersky’s method for creating parametrized test cases by extending unittest. This meant we could use a single main script to set all our test variables (such as the environment name) and then pass them into each test. We also created a parent class that defines the shared setup and teardown, so each individual test only needs to specify its testing steps. The main script pulls in all the test cases and uses them to build a suite.

One of the problems with SITS is that it builds pages for you, so you often have no control over things like element IDs, which are generated automatically. We made our tests much more robust by changing our selectors to look only at custom data attributes that we set ourselves. We also used XPath expressions to create less fragile selectors in certain steps. For example, in one test we wanted to search for a group of students and then click on the first search result. Instead of telling Selenium to click a link called “John Doe” (which might change depending on the search criteria), we can use an XPath expression to tell it to click the first link on the page.
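A minimal sketch of both ideas using Selenium's Python bindings (`find_element` with a `By` locator). The `data-test` attribute and its values (`search-box`, `search-submit`, `search-results`) are hypothetical; substitute whatever custom attributes you add to your own pages. The small `By` fallback only exists so the selector helpers can be imported without Selenium installed.

```python
try:
    from selenium.webdriver.common.by import By
except ImportError:
    # Minimal stand-in so the sketch runs without Selenium installed;
    # these strings are the same values as Selenium's own By constants.
    class By:
        CSS_SELECTOR = "css selector"
        XPATH = "xpath"


def by_data_test(value):
    """Locator for an element carrying our own (hypothetical) data-test attribute."""
    return (By.CSS_SELECTOR, f'[data-test="{value}"]')


def first_link_within(container):
    """XPath locator for the first link, in document order, inside the marked container."""
    return (By.XPATH, f'(//*[@data-test="{container}"]//a)[1]')


def open_first_search_result(driver, query):
    """Search for students and click the first result, whatever its link text is."""
    driver.find_element(*by_data_test("search-box")).send_keys(query)
    driver.find_element(*by_data_test("search-submit")).click()
    driver.find_element(*first_link_within("search-results")).click()
```

The parentheses in `(//*[@data-test="search-results"]//a)[1]` matter: without them, `//*[@data-test="search-results"]//a[1]` matches every link that is the first `a` child of its own parent, which may be several elements. If the results load asynchronously, an explicit wait (Selenium's `WebDriverWait`) before the final click may also be needed.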

Finally, we wanted to automate turning our test output into a clear, human-readable format. To do this we used the matplotlib library to generate graphs of the results, and then had the script use markup.py to create a simple HTML page displaying the graphs.
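A rough sketch of that reporting step, assuming matplotlib is installed and that timings have already been collected as a dict mapping each test name to a list of run times in seconds (a hypothetical format). The function names are illustrative, and the HTML is built with the standard library's `html` module rather than markup.py, purely to keep the sketch self-contained.

```python
import html
import statistics


def mean_timings(timings):
    """Collapse repeated runs to one mean time per test: {name: [secs, ...]} -> {name: secs}."""
    return {name: statistics.mean(runs) for name, runs in timings.items()}


def plot_before_after(before, after, path):
    """Save a grouped bar chart comparing mean times before and after the upgrade.

    Assumes `before` and `after` have the same test names as keys.
    """
    # Imported here so the HTML helper below works even without matplotlib installed.
    import matplotlib
    matplotlib.use("Agg")  # draw straight to a file; no display needed
    import matplotlib.pyplot as plt

    names = sorted(before)
    xs = range(len(names))
    fig, ax = plt.subplots()
    ax.bar([x - 0.2 for x in xs], [before[n] for n in names], width=0.4, label="Before upgrade")
    ax.bar([x + 0.2 for x in xs], [after[n] for n in names], width=0.4, label="After upgrade")
    ax.set_xticks(list(xs))
    ax.set_xticklabels(names, rotation=30, ha="right")
    ax.set_ylabel("Mean time (s)")
    ax.legend()
    fig.tight_layout()
    fig.savefig(path)
    plt.close(fig)


def html_report(title, image_paths):
    """Return a minimal HTML page embedding each graph image."""
    t = html.escape(title)
    imgs = "\n".join(f'<img src="{html.escape(p)}" alt="{html.escape(p)}">' for p in image_paths)
    return (f"<!DOCTYPE html>\n<html><head><title>{t}</title></head>\n"
            f"<body>\n<h1>{t}</h1>\n{imgs}\n</body></html>\n")
```

Calling `plot_before_after(mean_timings(before_runs), mean_timings(after_runs), "timings.png")` and then writing `html_report("SITS upgrade timings", ["timings.png"])` to a file gives a page that opens in any browser (`before_runs` and `after_runs` being your own collected data).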

As a next step, we are interested in doing more cross-browser testing using Selenium Grid. Parametrized test cases make this especially convenient, since you can write a test that takes the browser and OS as parameters. The same test can then be added to a suite multiple times, each time with a different browser.

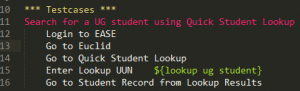

We have also been exploring Robot Framework, a test framework in Python which makes it easy to write simple and extremely human-readable tests. For example, a test which searches for a student might look like this:

Keywords are defined separately from the tests that use them, which I’ve found lends itself well to DRY, since it makes it really easy to re-use the same steps in different tests. Robot Framework also generates a nice little report page showing the status of all your tests, along with all sorts of useful information about them (e.g. when they were last run, how long they took, and any documentation). It’s a useful tool for anyone looking to implement accessible testing for their team.
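Keywords can also be written in Python. Below is a minimal sketch of a Robot Framework keyword library, assuming Selenium is installed. Robot Framework exposes each public method as a keyword, so a `.robot` test could call `Search For Student    John Doe`. The class name, URL, path and `data-test` attribute values are hypothetical.

```python
class StudentSearchKeywords:
    """Hypothetical Robot Framework keyword library written in plain Python.

    Each public method becomes a keyword, e.g. `search_for_student`
    is used in a .robot file as `Search For Student`.
    """

    ROBOT_LIBRARY_SCOPE = "SUITE"  # one library instance shared by all tests in a suite

    def __init__(self, base_url="https://sits.example.ac.uk"):
        self.base_url = base_url  # hypothetical address
        self._driver = None

    def _get_driver(self):
        # Leading underscore: a helper, not exposed as a keyword.
        if self._driver is None:
            from selenium import webdriver
            self._driver = webdriver.Firefox()
        return self._driver

    def open_student_search(self):
        self._get_driver().get(self.base_url + "/search")  # hypothetical path

    def search_for_student(self, name):
        driver = self._get_driver()
        # "css selector" and "xpath" are the values of Selenium's By.CSS_SELECTOR / By.XPATH.
        driver.find_element("css selector", '[data-test="search-box"]').send_keys(name)
        driver.find_element("css selector", '[data-test="search-submit"]').click()

    def first_result_should_contain(self, text):
        link = self._get_driver().find_element("xpath", '(//*[@data-test="search-results"]//a)[1]')
        if text not in link.text:
            # Robot Framework reports any raised exception as a failed step.
            raise AssertionError(f"Expected {text!r} in first result, got {link.text!r}")

    def close_browser(self):
        if self._driver is not None:
            self._driver.quit()
            self._driver = None
```

A test file could import it with `Library    StudentSearchKeywords.py`. Robot Framework's SeleniumLibrary already provides generic browser keywords such as `Open Browser`, `Input Text` and `Click Element`, so a custom library like this mainly pays off for higher-level steps that are specific to your own pages.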